At the end of last year, Google Analytics announced a new feature that automatically excludes malicious bots and crawlers from website data. What are bots and crawlers, you may ask? They are automated programs that collect content from the web. Malicious bots and crawlers interact with websites as though they were actual visitors, and in doing so they skew traffic data.

Most bots don’t run the code on a website. However, some are designed to do just that! And these rascals can skew data by artificially inflating bounce rates, lowering the average time spent by users, distorting entrance and exit pages, and altering the sales funnel. Most importantly, websites could report conversion rates that are significantly lower than the real figure if non-qualified traffic is factored in rather than removed.
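To see why bot traffic drags down a reported conversion rate, here is a minimal sketch of the arithmetic. The session and conversion counts are made-up numbers for illustration only, the `conversion_rate` helper is hypothetical, and the sketch assumes that bot sessions never convert.

```python
def conversion_rate(conversions: int, sessions: int) -> float:
    """Return conversions as a percentage of sessions."""
    if sessions <= 0:
        raise ValueError("sessions must be positive")
    return 100.0 * conversions / sessions


# Hypothetical figures, not real client data.
total_sessions = 1000   # everything recorded, bots included
bot_sessions = 200      # assumed share of non-qualified traffic
conversions = 40        # assumption: every conversion came from a real visitor

# Rate as reported when bot sessions are left in the data.
reported = conversion_rate(conversions, total_sessions)

# Rate once bot sessions are filtered out of the denominator.
filtered = conversion_rate(conversions, total_sessions - bot_sessions)

print(f"With bot traffic:    {reported:.1f}%")
print(f"Without bot traffic: {filtered:.1f}%")
```

With these example numbers, the same 40 conversions work out to 4.0% of 1,000 sessions but 5.0% of the 800 real ones. Fewer reported sessions, higher conversion rate.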

Gathering Better Data

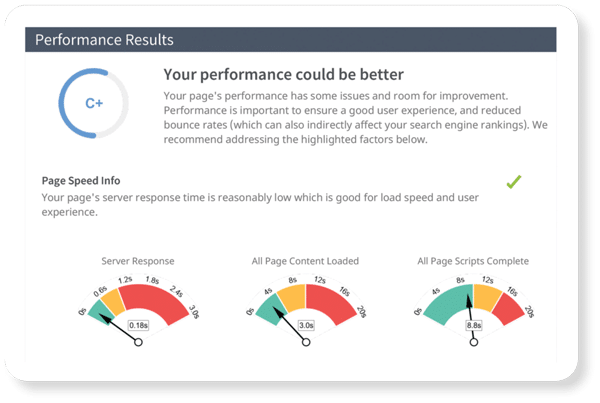

MyAdvice is dedicated to reporting accurate user behavior patterns. Since the announcement, our Digital Marketing team has been looking for the best way to report real data about your website traffic. That data includes conversion rates, bounce rates, user time spent, and visited pages, all of which should become more accurate moving forward.

What to Expect

As we collect more refined data, we expect reported website traffic levels to drop. Some sites will see a bigger drop than others, and some may see no change at all. Not to worry! Cutting out fake traffic is the first step in homing in on higher quality traffic. We also expect conversion rates to increase as a result.

MyAdvice is focused on showing how higher conversion rates indicate a stellar return on investment for our services. This extra filtering is part of our unrelenting pursuit of the most accurate and transparent information possible, so that our clients can make informed digital marketing decisions.